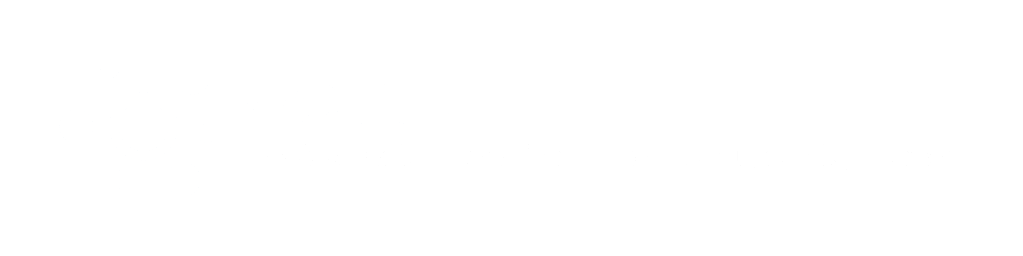

Deploying a machine learning model to production is where many AI projects stall, often not because the model is bad but because the infrastructure around it isn’t ready. MLOps (Machine Learning Operations) is the discipline that bridges the gap between model development and reliable production systems. This guide covers the full MLOps lifecycle for production machine learning, from data pipelines to model monitoring.

What Is MLOps for Machine Learning in Production?

MLOps applies DevOps principles – automation, versioning, and continuous delivery – to machine learning workflows. Without MLOps, teams face “model rot”: a model trained on historical data slowly degrades as real-world data drifts away from the training distribution.

Key benefits of a mature MLOps practice:

- Faster model deployment (days instead of weeks)

- Reproducible experiments – any model can be retrained from scratch

- Continuous monitoring – catch performance degradation before users do

- Audit trails – critical for GDPR and EU AI Act compliance

The MLOps Lifecycle for Machine Learning in Production

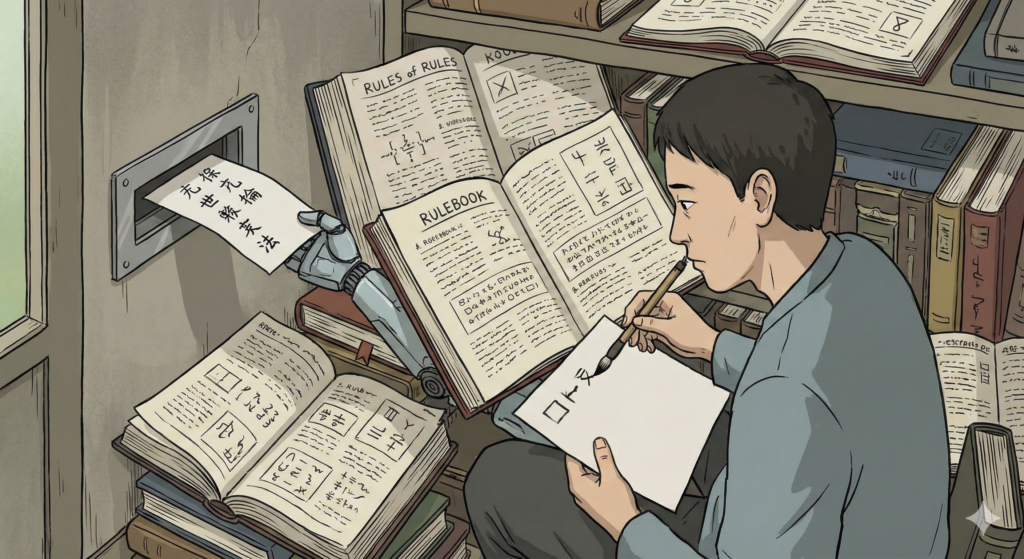

1. Data Ingestion and Versioning

Every ML model is only as good as the data it was trained on. The first step is building reliable data pipelines that:

- Pull data from multiple sources (databases, APIs, data lakes)

- Validate schema and data quality automatically

- Version datasets – so you can reproduce any historical model

Tools: Apache Airflow, dbt, DVC (Data Version Control)
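
The validation and versioning steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not how Airflow, dbt, or DVC work internally: the schema, the column names, and the helpers `validate_rows` and `dataset_fingerprint` are all hypothetical, and a content hash stands in for a real data versioning tool.

```python
import hashlib
import json

# Hypothetical schema for illustration: column name -> expected Python type.
EXPECTED_SCHEMA = {"user_id": int, "amount": float, "country": str}


def validate_rows(rows, schema=EXPECTED_SCHEMA):
    """Return a list of problems; an empty list means the batch passed."""
    problems = []
    for i, row in enumerate(rows):
        missing = set(schema) - set(row)
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
            continue
        for column, expected_type in schema.items():
            if not isinstance(row[column], expected_type):
                problems.append(
                    f"row {i}: {column} should be {expected_type.__name__}, "
                    f"got {type(row[column]).__name__}"
                )
    return problems


def dataset_fingerprint(rows):
    """Hash the canonical JSON form so identical data always gets the same ID."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


good_batch = [{"user_id": 1, "amount": 9.5, "country": "DE"}]
bad_batch = [{"user_id": "1", "amount": 9.5}]  # missing a required column

print(validate_rows(good_batch))
print(validate_rows(bad_batch))
print(dataset_fingerprint(good_batch))
```

Storing the fingerprint next to each trained model is one simple way to tie a model back to the exact data it saw.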

2. Model Training and Experiment Tracking

Training is iterative. You’ll run dozens of experiments with different hyperparameters, features, and architectures. Without tracking, you lose what worked.

Best practices:

- Log every experiment: parameters, metrics, artifacts

- Use a model registry to version trained models

- Never overwrite a production model – always version it

Tools: MLflow, Weights & Biases (W&B), Neptune.ai
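
To make the "log everything, never overwrite" practices concrete, here is a small file-based sketch. It is a stand-in for a real tracking tool and does not use the MLflow, W&B, or Neptune APIs; the `ModelRegistry` class, the model name `churn`, and the artifact paths are hypothetical.

```python
import json
import tempfile
from pathlib import Path


class ModelRegistry:
    """Append-only registry: every registration creates a new version file."""

    def __init__(self, root):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def versions(self, name):
        return sorted(int(p.stem[1:]) for p in self.root.glob(f"{name}/v*.json"))

    def register(self, name, params, metrics, artifact_path):
        existing = self.versions(name)
        version = existing[-1] + 1 if existing else 1
        record = {
            "version": version,
            "params": params,
            "metrics": metrics,
            "artifact": artifact_path,
        }
        target = self.root / name / f"v{version}.json"
        target.parent.mkdir(exist_ok=True)
        # Mode "x" raises FileExistsError instead of overwriting a version.
        with target.open("x", encoding="utf-8") as fh:
            json.dump(record, fh, indent=2)
        return version

    def load(self, name, version):
        with (self.root / name / f"v{version}.json").open(encoding="utf-8") as fh:
            return json.load(fh)

    def best(self, name, metric):
        records = [self.load(name, v) for v in self.versions(name)]
        return max(records, key=lambda r: r["metrics"][metric])


registry = ModelRegistry(tempfile.mkdtemp())
registry.register("churn", {"max_depth": 4}, {"auc": 0.81}, "artifacts/churn-a.pkl")
registry.register("churn", {"max_depth": 8}, {"auc": 0.84}, "artifacts/churn-b.pkl")
print(registry.best("churn", "auc")["version"])
```

Because old version files are never touched, rolling back is just a matter of pointing the serving layer at an earlier version number.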

3. ML Model Deployment to Production with CI/CD

Deploying an ML model means packaging it as a service that accepts requests and returns predictions. Options include:

- REST API (FastAPI + Docker) – most common for web integrations

- Batch scoring – for nightly reports or large dataset processing

- Edge deployment – for IoT or mobile (TensorFlow Lite, ONNX)

For enterprise environments, model deployment should be automated via a CI/CD pipeline – every approved model version is automatically containerized and deployed to staging, then production.

Tools: BentoML, Seldon Core, AWS SageMaker, Azure ML
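
The staging-then-production flow described above usually needs a gate that decides whether a model version may move forward. The sketch below shows one possible shape for that decision. The `Candidate` fields, the latency budget, and the accuracy comparison are assumptions chosen for illustration, not a rule taken from any of the tools listed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    version: int
    approved: bool           # sign-off recorded in the model registry
    staging_accuracy: float  # measured after deployment to staging
    p95_latency_ms: float    # measured under staging traffic


def next_stage(candidate, production_accuracy, max_latency_ms=200.0, min_gain=0.0):
    """Decide where a candidate goes: 'rejected', 'staging', or 'production'."""
    if not candidate.approved:
        return "rejected"
    if candidate.p95_latency_ms > max_latency_ms:
        return "staging"  # too slow, hold in staging
    if candidate.staging_accuracy < production_accuracy + min_gain:
        return "staging"  # not better than the current production model
    return "production"


candidates = [
    Candidate(version=7, approved=False, staging_accuracy=0.95, p95_latency_ms=80.0),
    Candidate(version=8, approved=True, staging_accuracy=0.93, p95_latency_ms=350.0),
    Candidate(version=9, approved=True, staging_accuracy=0.93, p95_latency_ms=90.0),
]
for c in candidates:
    print(c.version, next_stage(c, production_accuracy=0.91))
```

In a CI/CD pipeline, a function like this would run as a job after the staging deployment, with its result deciding whether the production deployment step executes.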

4. Monitoring and Retraining

A deployed model is not “done.” You need to track:

- Prediction drift – is the output distribution shifting?

- Data drift – is incoming data diverging from training data?

- Business metrics – is the model still driving the KPIs it was built for?

When drift is detected, trigger automated retraining pipelines to keep the model current.

Tools: Evidently AI, Grafana, Prometheus, Arize AI
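
One common way to quantify data or prediction drift is the Population Stability Index (PSI), which compares the binned distribution of a reference sample with that of live data. The sketch below is a plain-Python illustration, not the method used by any of the tools above. The bin count, the smoothing constant `eps`, and the retraining threshold of 0.25 (a widely quoted rule of thumb) are assumptions to tune per use case.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(reference, live, threshold=0.25):
    """Hypothetical trigger: request retraining when PSI exceeds the threshold."""
    return psi(reference, live) > threshold


reference = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in reference]

print(psi(reference, reference))
print(should_retrain(reference, shifted))
```

The same function works for prediction drift if the model's output scores are passed in place of an input feature.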

MLOps Tools Comparison

| Tool | Category | Best For |
|---|---|---|
| MLflow | Experiment tracking | Open-source, any cloud |
| DVC | Data versioning | Git-based workflows |
| BentoML | Model serving | REST API deployment |
| Evidently AI | Monitoring | Data & prediction drift |
| Kubernetes | Orchestration | Scalable inference |
| Apache Airflow | Pipelines | Complex data workflows |

Common MLOps Mistakes

- Skipping data validation – bad data silently poisons your model

- No model versioning – you can’t roll back when something breaks

- Manual deployment – slows down iteration and introduces human error

- Monitoring only infrastructure – you must also monitor model quality

- Treating MLOps as “later” – retrofitting pipelines onto a running system is usually far harder than building them upfront

Enterprise MLOps: Taking Machine Learning to Production at Scale

For companies integrating ML into existing Java or .NET backends, MLOps brings an extra challenge: bridging the Python-based data science world with the production systems that consume its predictions.

At Yotec, we typically:

- Build Python-based ML services exposed as REST APIs

- Integrate them with enterprise .NET or Java backends via standard HTTP contracts

- Deploy on Azure or AWS with automated retraining pipelines

- Ensure all data handling complies with GDPR and client security requirements
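
As an illustration of what a "standard HTTP contract" between a Python ML service and a Java or .NET caller might look like, the sketch below handles one JSON request body and returns a status code plus a JSON response. Everything specific here is hypothetical: the feature names, the `MODEL_VERSION` string, and the `score` formula, which is a placeholder for a real model call. A web framework would normally wrap this logic.

```python
import json

REQUIRED_FEATURES = ("tenure_months", "monthly_spend")  # hypothetical inputs
MODEL_VERSION = "churn-v2"  # hypothetical; returned so callers can log it


def score(features):
    """Placeholder for a real model; the formula is made up for illustration."""
    raw = 0.5 - 0.01 * features["tenure_months"] + 0.002 * features["monthly_spend"]
    return min(1.0, max(0.0, raw))


def handle_predict(raw_body):
    """Return (http_status, json_body) for the body of one POST request."""
    try:
        features = json.loads(raw_body)["features"]
    except (ValueError, KeyError, TypeError):
        return 400, json.dumps({"error": "body must be JSON with a 'features' object"})
    if not isinstance(features, dict):
        return 400, json.dumps({"error": "'features' must be an object"})
    missing = [name for name in REQUIRED_FEATURES if name not in features]
    if missing:
        return 422, json.dumps({"error": "missing features", "fields": missing})
    body = {"model_version": MODEL_VERSION, "prediction": score(features)}
    return 200, json.dumps(body)


print(handle_predict('{"features": {"tenure_months": 12, "monthly_spend": 50}}'))
print(handle_predict('{"features": {"tenure_months": 12}}'))
```

Returning the model version with every prediction lets the calling backend record which model produced a given decision, which supports the audit trail mentioned earlier.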

🚀 Ready to put your ML model into production?

Contact Yotec to discuss your MLOps infrastructure

📖 This article is part of our complete AI & Machine Learning Development Guide, covering everything from ML architecture to deep learning and AI ethics.

About The Author: Yotec Team
