Most project estimates are made at the very beginning of the life cycle. This seems fine until we realize that the estimates are produced before the requirements are defined, and therefore before the task itself has been studied. In other words, estimation is usually done at the wrong time. The process is more accurately called guessing or prediction, because every gap in the requirements is a guessing game. How much does this uncertainty affect the final estimate and its quality?

Let’s review several estimation practices that help deliver a project on time and within budget.

Decomposition

Instead of estimating a large task as a whole, it is better to split it into many small ones, estimate each of them, and obtain the final estimate as the sum of the parts. This kills as many as four birds with one stone:

We understand the scope of work better. To decompose a task, you have to read the requirements, and the odd spots surface immediately. The risk of misinterpreting the requirements goes down.

A more detailed analysis of the requirements automatically triggers the mental process of systematizing knowledge. This reduces the risk of forgetting part of the work, such as refactoring, test automation, or the extra effort of building and deploying.

The result of the decomposition can be reused for project management, provided that one tool is used for both processes (this is discussed in more detail later in the text).

If you measure the average error of the individual task estimates and compare it with the error of the total, the total error turns out to be smaller than the average. In other words, such an estimate is more accurate (closer to the real labor cost). At first glance this is counter-intuitive. How can the final estimate be more accurate if we get every decomposed task wrong?

Consider an example. To create a new form, you need to a) write the backend code, b) build the layout and write the frontend code, c) test and deploy. Task A was estimated at 5 hours, tasks B and C at 3 hours each, for a total of 11 hours. In reality, the backend took 2 hours, the form took 4, and another 5 were spent on testing and fixing bugs. The total effort was 11 hours: a perfect hit. Yet the error for task A is 3 hours, for task B 1 hour, and for task C 2 hours, so the average error is 2 hours.

The point is that underestimates and overestimates compensate for each other. The 3 hours saved on the backend covered the overruns of 1 and 2 hours on the frontend and testing. Real labor cost is a random variable that depends on many factors. If you get sick, it is hard to concentrate, and three hours may turn into six. An unexpected bug may take a whole day to find and fix. Or, on the contrary, it may turn out that you can reuse an existing component instead of writing your own. Positive and negative deviations offset each other, so the total error shrinks.
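To make the arithmetic concrete, here is a small Python sketch that recomputes the form example. The dictionary keys are just labels for tasks A, B and C; the hours are the ones from the example.

```python
# Estimates and actuals (hours) from the form example.
estimated = {"backend": 5, "frontend": 3, "testing": 3}
actual = {"backend": 2, "frontend": 4, "testing": 5}

# Signed error per task: positive means the task was overestimated.
signed_errors = {task: estimated[task] - actual[task] for task in estimated}

# Mean absolute error of the individual task estimates.
mean_abs_error = sum(abs(e) for e in signed_errors.values()) / len(signed_errors)

# Error of the total estimate: the signed errors cancel each other out.
total_error = abs(sum(signed_errors.values()))

print(signed_errors)   # {'backend': 3, 'frontend': -1, 'testing': -2}
print(mean_abs_error)  # 2.0
print(total_error)     # 0
```

Keep in mind that the cancellation only works for errors that go in both directions. A systematic bias, such as everyone being optimistic on every task, adds up instead of cancelling.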

Features and Tasks

At the heart of the decomposition is the Feature. A feature is a unit of deliverable functionality that can be released to production independently of others. This level is sometimes called a User Story, but we concluded that a User Story is not always well suited to defining tasks, so we use a more general term.

One team member is responsible for each feature. Others may help with the implementation, but one person hands the feature over to testing, and it is returned to the same person for rework. Depending on how the team is organized, this may be the team lead or the developer directly.

Unfortunately, some features are large. Working on such a volume alone would take a very long time, and testing and acceptance would take just as long. In that case we change the task type to Epic. An epic is just a very fat feature. We do not create anything larger than an epic; that is, epics may simply be big, huge, or gigantic. In any case, an epic is submitted for acceptance in parts (features).

To estimate more precisely, features are decomposed into separate subtasks (Task). For example, a feature could be the development of a new CRUD interface. Its task structure might look like this: “display a table with data”, “add filtering and search”, “develop a new component”, “add new tables to the database”. The task structure is usually of no interest to the business, but it is extremely important for the developer.
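As an illustration only, the Epic / Feature / Task hierarchy could be modeled like this in Python. The class names mirror the terms above, but the fields, the owner name and the hour values are assumptions made up for the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    title: str
    estimate_hours: float


@dataclass
class Feature:
    title: str
    owner: str  # exactly one responsible team member
    tasks: list[Task] = field(default_factory=list)

    @property
    def estimate_hours(self) -> float:
        # The feature estimate is the sum of its task estimates.
        return sum(t.estimate_hours for t in self.tasks)


@dataclass
class Epic:
    title: str
    features: list[Feature] = field(default_factory=list)

    @property
    def estimate_hours(self) -> float:
        # An epic is accepted feature by feature, so it just adds them up.
        return sum(f.estimate_hours for f in self.features)


# Hypothetical hour values for the CRUD example.
crud = Feature(
    title="New CRUD interface",
    owner="hypothetical-developer",
    tasks=[
        Task("Display a table with data", 4),
        Task("Add filtering and search", 6),
        Task("Develop a new component", 8),
        Task("Add new tables to the database", 3),
    ],
)
print(crud.estimate_hours)  # 21
```

Nothing is estimated at the feature or epic level directly in this sketch; those numbers are always derived from the tasks, which is the whole point of decomposition.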

Group estimation and planning poker

Programmers tend to be too optimistic about the amount of work. According to various sources, the underestimation most often falls in the range of 20-30%. In groups, however, the error is reduced, because the different points of view and temperaments of the estimators lead to a better analysis.

With the growing popularity of Agile, the practice of “planning poker” has become the most widespread. However, group estimation comes with two problems:

- Social pressure

- Time spent

Social pressure

In almost any group, the experience and personal effectiveness of the participants vary. If the team has a strong team lead, tech lead, or lead programmer, other members may feel uncomfortable and deliberately lower their estimates: “Vasya can do it, so am I any worse? I can do that too!” The reasons may differ: a desire to appear better than one actually is, competitiveness, or plain conformism. The result is the same: the group estimate loses all its advantages and becomes an individual one. The team lead estimates, and the others simply nod along.

There are several basic recommendations:

- Most estimates are too low. Can’t choose between two values? Take the larger one.

- Not sure about the estimate? Play the “?” card or a card with a higher number. Hoping for the best almost never pays off.

- Always compare plan and fact. If you know that you usually take twice as long as planned, give an estimate twice as high as your first thought. Started to overestimate? Multiply by one and a half in your head instead. After several iterations, the quality of your estimates should improve significantly.
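The “compare plan and fact” recommendation can be sketched as a simple correction factor. This is a minimal illustration, assuming you keep a history of (estimated, actual) hours for finished tasks; the function names and the sample numbers are hypothetical.

```python
def correction_factor(history: list[tuple[float, float]]) -> float:
    """Return total actual hours divided by total estimated hours.

    `history` holds (estimated_hours, actual_hours) pairs for finished tasks.
    A result above 1 means past estimates were too low.
    """
    total_estimated = sum(est for est, _ in history)
    total_actual = sum(act for _, act in history)
    if total_estimated <= 0:
        raise ValueError("history must contain positive estimates")
    return total_actual / total_estimated


def calibrated(raw_estimate: float, history: list[tuple[float, float]]) -> float:
    """Scale a gut-feeling estimate by the historical plan/fact ratio."""
    return raw_estimate * correction_factor(history)


# Hypothetical history: every task took twice as long as planned.
past = [(4, 8), (3, 6), (5, 10)]
print(correction_factor(past))  # 2.0
print(calibrated(6, past))      # 12.0
```

Recomputing the factor after every iteration gives the feedback loop described above: once you start overestimating, the ratio drops on its own.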

Time spent

You know the saying: “Want to work? Call a meeting!” It is bad enough that one programmer tries to predict the future instead of writing code; now the whole group does it. Besides, reaching a decision in a group takes much longer than deciding individually. So group estimation is an extremely costly process. It is worth looking at these costs from another angle. First, during estimation the group is forced to discuss the requirements, which means someone has to read them. That is already not bad. Second, compare these costs with the losses the company incurs when a project is underestimated.

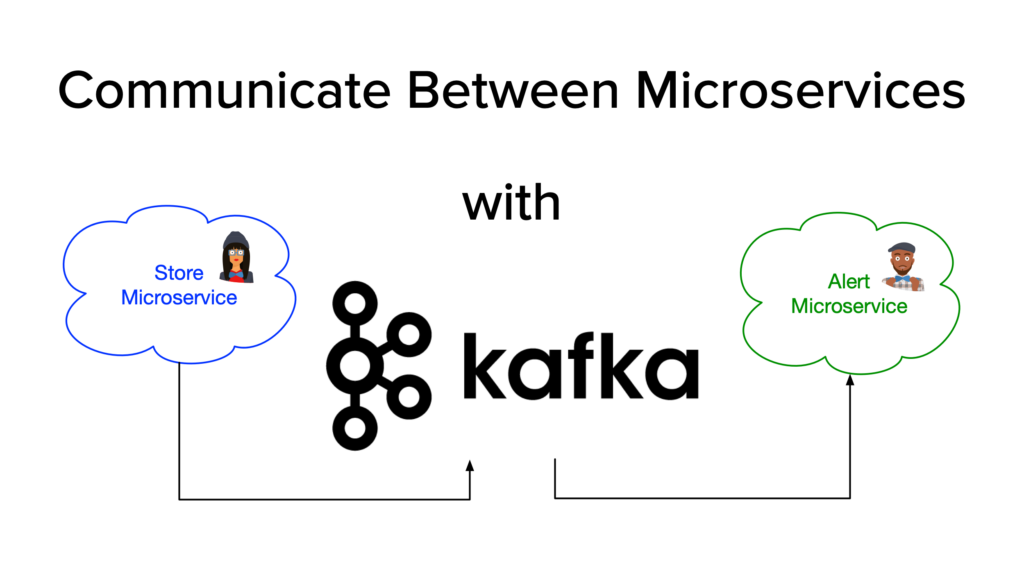

If we treat the cost of estimation as an investment in making the right management decisions, it no longer seems so expensive. Group size is another matter. Of course, there is no need to make the whole team estimate the entire scope of work. It is much more reasonable to split the work by modules (ahem, microservices) and give the teams autonomy. At a higher level, the estimates from each team are then used to draw up a project plan, which smoothly brings us to the next topic.

Dependencies, Gantt charts

While estimates are usually given by programmers, drawing up the project plan falls to middle managers. Remember, I wrote that you can help these people if you use one tool for both decomposition and project management. An estimate and a calendar date are not the same thing. For example, to display a simple table with data you need:

- Table in DB

- Backend code

- Code on the frontend

Doing the tasks in this order is easiest from a technical point of view. In reality, however, people have different specializations, and a frontend specialist may become available earlier. Instead of sitting idle, it is more logical for that person to start developing the UI, replacing the server request with a mock or hard-coded data. Then, by the time the API is ready, all that remains is to replace that code with a call to the real method ... in theory. In practice, the maximum level of parallelism can be achieved as follows:

- First, we quickly write the Swagger specification to agree on the API.

- Then we hard-code data on the backend or on the frontend, depending on who is available.

- In parallel, we build the database, backend, and frontend. The database and backend partially block each other, but most often these competencies are combined in one person, so the work actually goes sequentially: first the database, then the backend.

- We put everything together and test.

- We fix bugs and test again.

It is important that the first, fourth, and fifth steps are completed as quickly as possible to reduce the amount of blocking.

Besides technological constraints and the limited availability of specialists with the right skills, there are also business priorities. This may mean that a demonstration for an important client is already scheduled three weeks from now, and the client does not care about the first half of your project plan. The client wants to see the end result, which will be available in two months at the earliest. In that case you have to prepare a separate plan for the demonstration: add tasks to populate the database with the necessary data, insert new navigation links into the UI, and so on. It is also desirable that only about 20% of the code ends up thrown away, rather than the whole demonstration.
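The “hard-code the data first” step from the list above could look roughly like this. It is a sketch, not our production code: `OrdersApi`, `MockOrdersApi`, `render_table` and the sample orders are invented for illustration, and the contract would normally come from the agreed API specification.

```python
from typing import Protocol


class OrdersApi(Protocol):
    """Contract agreed up front (for example, from the Swagger spec)."""

    def list_orders(self) -> list[dict]: ...


class MockOrdersApi:
    """Hard-coded data so that UI work can start before the backend exists."""

    def list_orders(self) -> list[dict]:
        return [
            {"id": 1, "customer": "Alice", "total": 120.0},
            {"id": 2, "customer": "Bob", "total": 75.5},
        ]


def render_table(api: OrdersApi) -> list[str]:
    """Stand-in for the UI layer: it depends only on the contract."""
    return [
        f"{o['id']}: {o['customer']} ({o['total']:.2f})"
        for o in api.list_orders()
    ]


print(render_table(MockOrdersApi()))
# ['1: Alice (120.00)', '2: Bob (75.50)']
```

When the real API is ready, only the object passed to `render_table` changes. The mock is the part that gets thrown away, which keeps the amount of disposable code small.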

Carving up such a plan artfully is not an easy task. Tracking dependencies greatly simplifies the process. Before starting the report module, you need to build the data entry module. Logical? Add a dependency. Repeat for all related tasks. Believe me, many of the dependencies will come as a surprise to you.
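A minimal sketch of the idea, using `graphlib` from the Python standard library (3.9+): record the dependencies as a graph and let the computer produce a valid order. Apart from the two modules mentioned above, the task names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Each key depends on every task in its set.
dependencies = {
    "data entry module": {"database tables"},
    "report module": {"data entry module"},
    "export to PDF": {"report module"},
    "database tables": set(),
}


def execution_order(deps: dict[str, set[str]]) -> list[str]:
    """Return one valid order in which every task follows its prerequisites."""
    return list(TopologicalSorter(deps).static_order())


def has_cycle(deps: dict[str, set[str]]) -> bool:
    """Report whether the dependencies contradict each other."""
    try:
        execution_order(deps)
    except CycleError:
        return True
    return False


order = execution_order(dependencies)
print(order)
# ['database tables', 'data entry module', 'report module', 'export to PDF']
print(has_cycle({"a": {"b"}, "b": {"a"}}))  # True
```

A cycle in the graph is one of those surprises: it means two tasks wait for each other and the decomposition has to be revisited.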

Business process automation tasks usually produce several long “snakes” of related tasks with a few large blocking nodes. Most often, the original plan does not use resources efficiently and/or is too long in calendar terms. Lowering the labor estimates on the grounds that “maybe we will get it done faster” is not an option: the estimate is most likely optimistic as it is. We have to go back to the decomposition, look for chains that are too long, and add extra “forks” to increase the degree of parallelism. Thus, at the price of higher total labor cost (more people working in parallel on one project), the project’s calendar duration is reduced. Remember “The Mythical Man-Month”? Compressing the plan by more than 30% is unlikely to work. So the plan may be revised several times before the budget and deadline are agreed. There are several techniques that make the process faster and easier.
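The difference between total labor and calendar time can be illustrated with a tiny critical path calculation. This is a sketch under strong assumptions: the plan and its day estimates are invented, there are no cycles, and there are always enough people to run independent tasks in parallel.

```python
from functools import lru_cache

# Hypothetical plan: task -> (estimate in days, prerequisites).
plan = {
    "api spec": (1, []),
    "database": (3, ["api spec"]),
    "backend": (5, ["database"]),
    "frontend": (6, ["api spec"]),
    "integration test": (2, ["backend", "frontend"]),
}


def calendar_days(plan: dict[str, tuple[int, list[str]]]) -> int:
    """Length of the longest dependency chain (the critical path)."""

    @lru_cache(maxsize=None)
    def finish(task: str) -> int:
        # A task finishes after its slowest prerequisite plus its own duration.
        duration, prerequisites = plan[task]
        return duration + max((finish(p) for p in prerequisites), default=0)

    return max(finish(task) for task in plan)


total_labor = sum(duration for duration, _ in plan.values())
print(total_labor)          # 17
print(calendar_days(plan))  # 11
```

Here 17 person-days of work fit into 11 calendar days because the frontend runs in parallel with the database and backend. Shortening the calendar further means breaking up the “api spec, database, backend, integration test” chain, not trimming the estimates.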

Task blocking

The first reason for blocking, dependencies, we have already covered. In addition, the requirements may simply be unclear or imprecise. You need a tool that lets you block tasks and ask questions. As the requirements are clarified, tasks can be unblocked and estimates adjusted. This process, by the way, almost always takes place during the project rather than before it.

Critical path, risks ahead

In short: if you get the database structure wrong, you will have to rewrite the backend; if you miscalculate the load, you may have to change the technology altogether. I wrote in detail about the risks of design work in the article “Cost-effective code.” The sooner the risks on the critical path materialize, the better: there is still time, and something can be done. It would be even better if they did not materialize at all, but let’s be realistic.

Therefore, start with the murkiest, most difficult, and most unpleasant tasks: put them in the “blocked” status, clarify them, re-estimate, and remove dependencies wherever possible.

Acceptance criteria, test cases

Natural language, whether Russian, English, or Chinese, can be both redundant and imprecise. Test cases help overcome these limitations. They are also a good communication tool between developers, business users, and the quality department.

Project management

Want to make God laugh? Tell him about your plans. Even if a miracle happened and you collected and clarified all the requirements before starting work, you have enough competent people, and the plan allows most of the work to be done in parallel, you are still not immune to employee illness, estimation errors, and other risks materializing. Therefore, the plan must be updated regularly and compared with the actual state of affairs. And for that, tracking working time is important.

Time Tracking

Time tracking has long been the de facto standard in the industry. It is highly desirable to do it in the same tool as the estimation, which lets you track how the time actually spent deviates from the estimate. It is even better if the project manager uses the same tool: then any delay on the critical path is noticeable immediately. Using different tools is also possible, but maintaining the process takes much more effort, which creates a temptation to neglect it. We already know how that ends. We use Jira. Everything described in this article is currently available out of the box, although it requires a little tweaking.
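What such tracking boils down to can be sketched in a few lines of Python. This is not Jira's API; the `TrackedTask` class, its fields and the 20% tolerance are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class TrackedTask:
    title: str
    estimate_hours: float
    logged_hours: float
    on_critical_path: bool = False

    @property
    def deviation(self) -> float:
        """Logged minus estimated hours; positive means an overrun."""
        return self.logged_hours - self.estimate_hours


def critical_overruns(tasks: list[TrackedTask], tolerance: float = 0.2) -> list[str]:
    """Titles of critical-path tasks whose overrun exceeds `tolerance`.

    `tolerance` is a fraction of the estimate; 0.2 is an arbitrary threshold.
    """
    return [
        t.title
        for t in tasks
        if t.on_critical_path and t.deviation > tolerance * t.estimate_hours
    ]


# Hypothetical tracked tasks.
tasks = [
    TrackedTask("database", 8, 12, on_critical_path=True),
    TrackedTask("backend", 16, 17, on_critical_path=True),
    TrackedTask("frontend", 10, 15),
]
print(critical_overruns(tasks))  # ['database']
```

The frontend overrun is larger in absolute terms, but it is not on the critical path in this example, so it does not move the delivery date and is not flagged.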

Conclusion

- Estimation is difficult.

- Decomposition helps find gaps in the requirements and improves the quality of the estimate.

- Group estimates are more accurate than individual ones; use planning poker.

- Blockers, test cases, and formal acceptance criteria improve communication, which in turn increases the project’s chances of success.

- Start with the riskiest tasks on the critical path of the project.

- Estimation is not a one-time action but an inseparable part of project management.

- Without time tracking, it is impossible to keep the project status up to date and adjust the estimates.


About The Author: Yotec Team
