TL;DR: The Core Dilemma

Whether artificial intelligence genuinely understands anything is one of the most pressing questions of our time, and one that is critical to get right. Large Language Models (LLMs) can compose poetry and write code, yet they lack semantic grounding: their words are not anchored in any experience of the things those words refer to. This post explores why mastery of language structure (syntax) is not the same as genuine understanding (semantics) and proposes three new tests for true Artificial General Intelligence (AGI).

Understanding Artificial Intelligence: Why Current Models Fall Short

In recent years, neural networks have captured the public imagination like few technologies before them. They’ve become a lightning rod for both hope and anxiety – promised as solutions for global challenges like disease, yet feared as tools that will displace creative professionals.

Yet, beneath the glossy veneer of modern AI lies a fundamental problem: Do these systems actually understand anything at all? This isn’t about processing power; it’s about whether the intelligence we witness is genuine comprehension or merely an elaborate pantomime.

The Illusion of Understanding: From Searle to ‘Stochastic Parrots’

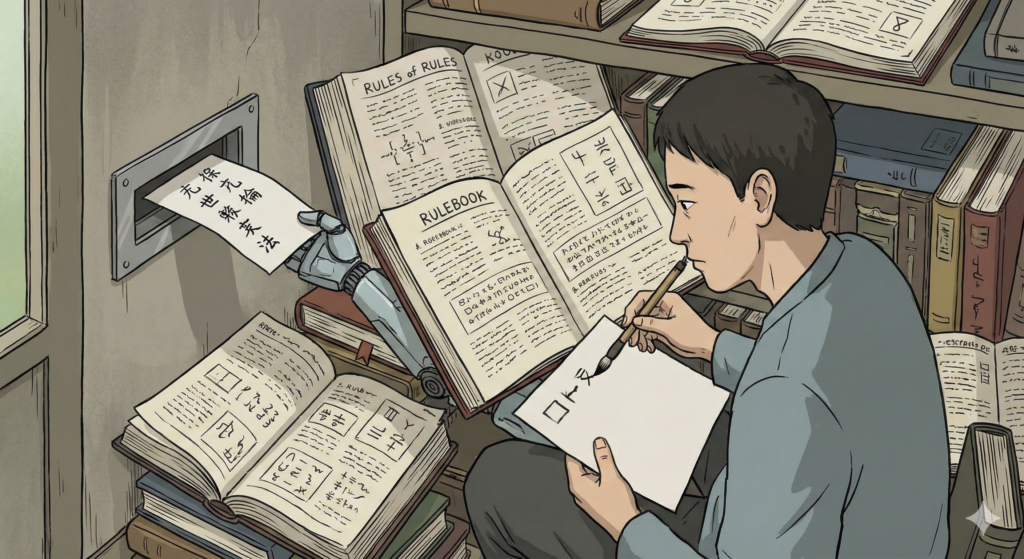

To see the problem clearly, we must revisit John Searle’s Chinese Room thought experiment (1980). Imagine a person who knows no Chinese, sealed in a room, who uses a rulebook to select Chinese symbols to pass back in response to the symbols slipped in. To an outsider, the room seems fluent in Chinese. In reality, the person is simply manipulating symbols without understanding a single word.

Modern LLMs function similarly. They are extraordinarily skilled at identifying statistical patterns between words, which is why critics have described them as “stochastic parrots”. They predict the most probable next token based on patterns learned from billions of examples, but they don’t “know” things the way humans do.
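To make “predict the most probable next token” concrete, here is a deliberately tiny sketch: a bigram model that counts which word follows which in a small made-up corpus and always picks the most frequent follower. This is an assumption-laden toy, not how production LLMs work; real models use neural networks over subword tokens trained on vastly more data. The corpus and the function names `train_bigrams`, `predict_next`, and `generate` are hypothetical, for illustration only.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, how often each other word follows it."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    # most_common(1) returns the highest count; ties go to the first follower seen.
    return counts.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, length: int) -> list[str]:
    """Greedily chain next-word predictions, starting from `start`."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


# Hypothetical toy corpus; a real model would see billions of tokens.
corpus = "the glass is fragile . the egg is fragile . the nail is sharp . the glass breaks"
model = train_bigrams(corpus)

print(predict_next(model, "is"))          # fragile
print(" ".join(generate(model, "the", 3)))  # the glass is fragile
```

The output “the glass is fragile” sounds like knowledge, yet nothing in the counts represents glass, fragility, or breaking; the model only records that “fragile” often follows “is”. That is the gap between syntax and semantics in miniature.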

A Real-World Example of the Gap:

An LLM can fluently explain the laws of physics or the properties of glass. However, if you give it a spatial reasoning puzzle, such as how to stably stack a laptop, an egg, and a nail, it may struggle, whereas a child drawing on an intuitive “world model” of physical objects would immediately grasp the consequences of each arrangement.

Can AI Truly Understand Language? Syntax vs. Semantics Explained

In philosophy of mind, a “philosophical zombie” is a hypothetical being physically identical to a human but lacking any inner conscious experience. Current AI systems are, in effect, a digital version of this:

- They can describe the “searing pain of loss” without ever feeling pain.

- They can generate a sunset description without ever seeing light.

- They register that certain words cluster together, but they lack the semantic grounding that comes from physical or social experience.

Mastery of syntax (the rules and structure of language) is not the same as mastery of semantics (the meaning behind it). Without a true internal framework, the AI remains a master of simulation.

Deep Dive: For a more technical look at how LLMs operate without any physical or mental experience of the world, read our post “The whole world is a model, and LLM is the backend in it.”

Testing for True Understanding: Beyond the Turing Test

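The Turing Test, which asks only whether a machine’s conversation can pass for a human’s, is increasingly obsolete: fluent imitation is exactly what LLMs excel at. We need new standards to distinguish genuine AGI from advanced mimicry: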

1. The Unexpectedness (Surprise) Test

Can the system produce insights that transcend logical extrapolation from its training data? True creativity isn’t just recombining patterns; it’s the ability to introduce novel, “sideways” thinking that suggests internal processing rather than mechanical manipulation.

2. The Emergence Test

Does the system develop its own internal framework of values and beliefs that evolve independently of its training? Detecting authentic background beliefs, rather than ones generated to satisfy a user, is difficult but essential for demonstrating genuine cognition.

3. The Autonomous Goals Test

Is the system capable of formulating its own long-term objectives? We are currently seeing the rise of Agentic AI – tools designed to complete complex tasks. However, these still serve external agendas. A true mind possesses self-determined purpose: the ability to say “I want X” rather than “I will do Y for you”.

The Uncomfortable Truth

We may be no closer to creating genuine consciousness than we were decades ago. We have mastered the manipulation of text that mimics intelligent behavior, but mimicry is not understanding.

As we continue to develop increasingly powerful AI and LLM solutions, we must remain cautious about attributing true understanding to these systems. The age of artificial intelligence is here, but the age of artificial understanding remains a distant frontier.

What do you think?

Are we building minds, or just more convincing “zombies”?

If you want to explore how today’s AI systems can be applied in your product while staying realistic about their limitations, our team can help you design AI/ML and LLM solutions that create real value.

📖 This article is part of our complete AI & Machine Learning Development Guide — explore deep learning, neural network architectures, and the future of AI development.

About The Author: Yotec Team
