In 2026, enterprises are moving beyond single large language models toward enterprise agentic AI: networks of autonomous AI agents that can plan, act, and learn within well-defined constraints. This approach lets organizations orchestrate multiple specialized models (often small language models, or SLMs) to handle complex workflows such as customer onboarding, claims processing, or software delivery at scale.

At Yotec, we see this shift daily when modernizing AI stacks for clients in the EU, UK, and North America, where compliance, latency, and cost are as important as accuracy. In this guide, we explain what enterprise agentic AI is, why small language models matter, and how to take an existing AI initiative into production-ready agentic workflows. For a broader overview of AI project lifecycles, best practices, and technology choices, see our main AI & Machine Learning Development Guide.

What is enterprise agentic AI?

Agentic AI describes systems where AI components do not just respond to prompts but plan sequences of actions, call tools, and adapt based on feedback. In an enterprise context, these capabilities are wrapped with observability, guardrails, and governance so they remain safe, auditable, and aligned with business processes. If you want a short, non-technical primer, this overview of agentic AI trends for 2026 is a helpful starting point.

Key characteristics of enterprise-grade agentic AI include:

- Goal-driven behavior: agents receive high-level objectives and decompose them into tasks (for example, “prepare a quarterly revenue report from CRM and ERP”).

- Tool usage: agents call APIs, databases, RPA bots, or internal microservices to collect data or execute actions.

- Collaboration and delegation: “manager” agents break work into subtasks for “specialist” agents (researcher, coder, analyst, reviewer).

- Feedback loops: agents evaluate outputs, request corrections, or escalate to humans in the loop.
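To make these characteristics concrete, here is a minimal Python sketch of a single agent run. It is an illustration under stated assumptions, not a reference implementation: the tool functions `fetch_crm_revenue` and `fetch_erp_revenue`, the revenue figures, and the `review_threshold` parameter are all hypothetical stand-ins for real integrations.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tools: stand-ins for real CRM and ERP API calls.
def fetch_crm_revenue(quarter: str) -> float:
    return 120_000.0

def fetch_erp_revenue(quarter: str) -> float:
    return 80_000.0

TOOLS: dict[str, Callable[[str], float]] = {
    "crm": fetch_crm_revenue,
    "erp": fetch_erp_revenue,
}

@dataclass
class Task:
    tool: str
    argument: str

@dataclass
class RunResult:
    total: float
    escalated: bool
    log: list[str] = field(default_factory=list)

def plan(quarter: str) -> list[Task]:
    """Manager step: decompose the goal into one task per data source."""
    return [Task(tool=name, argument=quarter) for name in TOOLS]

def run_goal(quarter: str, review_threshold: float = 1_000_000.0) -> RunResult:
    """Execute the plan, then apply a simple feedback check."""
    log: list[str] = []
    total = 0.0
    for task in plan(quarter):
        value = TOOLS[task.tool](task.argument)  # specialist step: call the tool
        log.append(f"{task.tool}:{value}")
        total += value
    # Feedback loop: implausible totals are escalated to a human reviewer.
    escalated = total <= 0 or total > review_threshold
    return RunResult(total=total, escalated=escalated, log=log)

result = run_goal("2026-Q1")
print(result.total, result.escalated)
```

In a real deployment the planning step would typically be model-driven rather than a fixed loop over tools, but the shape (plan, call tools, check, escalate) stays the same.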

For CIOs and CTOs, the value is not just in automation but in building reusable agent workflows that can be monitored, tested, and improved over time. For more background on transforming enterprise workflows with AI, MuleSoft’s perspective on AI and integration trends is a useful reference.

Designed correctly, enterprise agentic AI becomes a reusable automation layer that sits on top of your data and systems, rather than a one-off chatbot experiment.

Why small language models (SLMs) are critical for enterprise agentic AI

While foundation LLMs still matter, many 2026 architectures pair them with small language models tuned for specific domains and deployed closer to where data lives. Guides such as HCLTech’s overview of small language models for enterprise AI summarize why SLMs are becoming a default building block.

Benefits of SLMs in agentic workflows:

- Lower latency: SLMs running on edge servers or private clouds respond faster, which is crucial for interactive agents in support or trading.

- Better data control: organizations can deploy SLMs inside their own VPCs to keep PII and sensitive logs off public infrastructure.

- Domain specialization: SLMs fine-tuned on your domain (policy documents, codebase, medical guidelines) often outperform generic LLMs on narrow tasks.

- Cost efficiency: high-volume workloads (search, routing, classification) can run on SLMs, while expensive LLMs are reserved for truly complex tasks.

A typical pattern we implement is to use an SLM for fast “triage” and routing, and escalate to a larger model only when necessary. For more detail on this pattern, the enterprise guide to SLMs and edge AI provides useful architectural examples.
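The triage-and-escalate pattern can be sketched in a few lines of Python. This is a simplified illustration: `slm_classify` and `llm_answer` are hypothetical placeholders (a keyword heuristic and a stub) standing in for calls to a self-hosted SLM and a larger hosted model, and the `min_confidence` threshold of 0.8 is an assumed value you would tune against your own evaluation data.

```python
from dataclasses import dataclass

@dataclass
class Triage:
    label: str
    confidence: float

def slm_classify(text: str) -> Triage:
    """Placeholder for a self-hosted SLM call; here a keyword heuristic."""
    lowered = text.lower()
    if "password" in lowered:
        return Triage("account_access", 0.95)
    if "invoice" in lowered:
        return Triage("billing", 0.90)
    return Triage("unknown", 0.30)

def llm_answer(text: str) -> str:
    """Placeholder for a call to a larger, more expensive model."""
    return "llm:escalated"

def route(text: str, min_confidence: float = 0.8) -> str:
    """Handle the request on the SLM when it is confident, else escalate."""
    triage = slm_classify(text)
    if triage.confidence >= min_confidence:
        return f"slm:{triage.label}"
    return llm_answer(text)

print(route("I forgot my password"))
print(route("Explain the clause about cross-border liability"))
```

Keeping the threshold as an explicit parameter makes the cost and quality trade-off visible: raising it sends more traffic to the larger model, lowering it keeps more on the SLM.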

If you are still at the model-selection stage, you may want to revisit the model section in our AI & Machine Learning Development Guide to align SLM choices with your broader AI strategy.

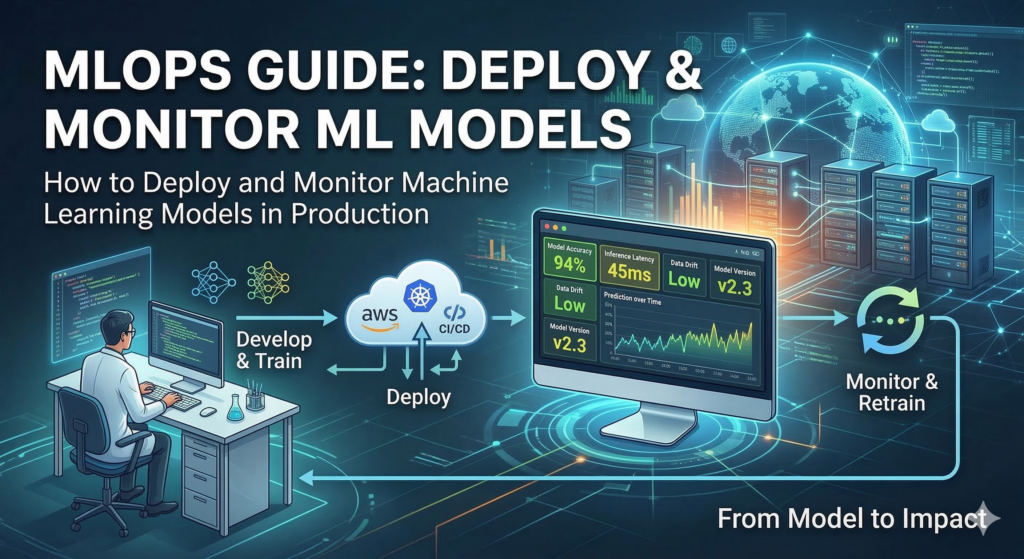

Architecture of a production-ready agentic AI system

A robust enterprise implementation usually includes these layers:

- Experience layer (channels and UX)

  - Web applications, internal tools (e.g., Salesforce, Jira, ServiceNow), chat widgets, or mobile apps.

  - Authentication via SSO and role-based access control.

- Agent orchestration layer

  - An orchestrator or “brain” coordinates multiple agents with different roles and skills.

  - Uses frameworks or platforms that support workflows, memory, and tool integrations. For a trends overview, see summaries of AI agent platforms and tools.

- Model layer (LLMs and SLMs)

  - Mix of vendor LLMs (for general reasoning) and self-hosted SLMs (for domain-specific tasks, compliant processing).

  - Policy layers to enforce prompt and output controls.

- Tooling and integration layer

  - Connectors to CRMs, ERPs, data warehouses, APIs, and RPA systems so agents can act, not just read.

  - Event-driven patterns (queues, pub/sub) to keep workflows scalable.

- Observability, governance, and safety

  - Central logging, metrics, and traces for agent behavior.

  - Guardrails for PII redaction, toxicity filters, and role-based policies.

  - Human review queues for high-risk actions (payments, contracts, medical decisions).
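As one illustration of the safety layer, the Python sketch below redacts email addresses before anything is logged and holds high-risk actions for human review. Everything here is an assumption for demonstration purposes: the `ActionGate` class, the action names, and the single email regex are hypothetical, and production PII detection would need to cover far more than email addresses.

```python
import re
from dataclasses import dataclass, field

# Simplified email pattern; real PII detection covers many more data types.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

# Hypothetical action names that always require a human decision.
HIGH_RISK_ACTIONS = {"payment", "contract", "medical_decision"}

def redact_pii(text: str) -> str:
    """Replace email addresses with a fixed placeholder."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)

@dataclass
class ActionGate:
    review_queue: list[tuple[str, str]] = field(default_factory=list)
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def submit(self, action: str, details: str) -> str:
        safe_details = redact_pii(details)
        self.audit_log.append((action, safe_details))  # log only redacted text
        if action in HIGH_RISK_ACTIONS:
            self.review_queue.append((action, safe_details))
            return "pending_review"
        return "executed"

gate = ActionGate()
print(gate.submit("payment", "Refund jane.doe@example.com 50 EUR"))
print(gate.submit("send_summary", "Weekly digest ready"))
```

Routing every action through one gate keeps the audit trail complete and makes the list of high-risk actions a single, reviewable piece of configuration.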

If you are designing this architecture from scratch, you can reuse many of the principles from the “Productionizing AI & ML systems” section of our AI & Machine Learning Development Guide, applying them specifically to agentic workflows.

High-impact use cases by industry

Below are some agentic AI use cases we see moving into production in 2025–2026. For more examples of how autonomous agents are reshaping industries, this article offers useful cross-industry patterns.

Financial services

- Autonomous KYC/AML data collection, risk scoring, and investigation summaries

Insurance

- Claims intake, document understanding, and triage across channels

E‑commerce & retail

- Product enrichment, pricing suggestions, and multi-channel campaign orchestration

Healthcare

- Care-pathway suggestions, coding assistance, and clinical documentation drafting

Manufacturing

- Predictive maintenance workflows and incident triage across plants

Software & IT

- AI agents for code generation, testing, and incident response runbooks

In each scenario, success depends less on “which model” and more on how well the agents are embedded into existing systems, SLAs, and human roles. If you want a deeper dive into AI use cases by sector, review the industry sections in our main AI services content.

Implementation roadmap: from PoC to production

If you already have a chatbot or LLM PoC, you can evolve it into a full agentic system with these stages. The high-level steps mirror the phases described in our AI & Machine Learning Development Guide but focus specifically on agent orchestration and SLMs.

- Assess and prioritize workflows

  - Identify processes with high manual effort and clear KPIs (time-to-resolution, FTE hours, error rate).

  - Validate regulatory constraints (e.g., GDPR, HIPAA) per region before choosing hosting options.

- Design your agent topology

  - Define a small set of agent roles: orchestrator, researcher, data collector, summarizer, reviewer.

  - Map each role to tools and permissions, not just prompts.

- Select models and hosting strategy

  - Choose which tasks can run on SLMs versus which need a larger LLM; design for a “right model for the right task” strategy.

  - Decide between cloud, on-prem, or hybrid, taking into account data residency for EU or region-specific regulations. For additional perspective on SLM deployment models, see this guide.

- Integrate with core systems

  - Implement secure connectors and service accounts for CRM, ERP, ticketing, and data warehouses.

  - Introduce feature flags so you can roll out to specific departments or markets gradually.

- Build guardrails and monitoring from day one

  - Add content filters, PII detection, and allow/deny lists for tools and actions.

  - Instrument agent runs with tracing and feedback collection to continuously improve prompts and policies. Google Cloud’s summary of AI agent trends highlights why observability is now a baseline requirement.

- Pilot, iterate, then scale

  - Start with one or two departments (for example, a single support queue or a regional sales team).

  - After measurable success, replicate the pattern across geographies and business units.
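Several of these stages (mapping roles to tools, allow lists, feature flags, and tracing) can be combined in one small gateway that every tool call passes through. The Python sketch below is illustrative only: the `ToolGateway` class, the tool names, and the `support_emea` department flag are hypothetical and would map onto your own identity, feature-flag, and tracing systems.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-tool allow list; tool names are illustrative only.
ROLE_TOOLS: dict[str, set[str]] = {
    "researcher": {"search_tickets", "read_crm"},
    "summarizer": {"read_crm"},
    "reviewer": {"read_crm", "approve_reply"},
}

# Hypothetical feature flag: departments included in the pilot rollout.
PILOT_DEPARTMENTS: set[str] = {"support_emea"}

@dataclass
class ToolGateway:
    """Checks every tool call and records a trace entry for later review."""
    trace: list[dict] = field(default_factory=list)

    def authorize(self, role: str, tool: str, department: str) -> bool:
        in_pilot = department in PILOT_DEPARTMENTS
        permitted = tool in ROLE_TOOLS.get(role, set())  # unknown roles get nothing
        allowed = in_pilot and permitted
        self.trace.append(
            {"role": role, "tool": tool, "department": department, "allowed": allowed}
        )
        return allowed

gateway = ToolGateway()
print(gateway.authorize("summarizer", "read_crm", "support_emea"))
print(gateway.authorize("summarizer", "approve_reply", "support_emea"))
print(gateway.authorize("reviewer", "approve_reply", "sales_na"))
```

Because denied calls are traced as well as allowed ones, the same log can feed both security review and prompt or policy tuning.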

At Yotec, we typically combine this roadmap with our existing AI/ML development framework to ensure each agentic deployment remains maintainable, testable, and auditable. If you are evaluating vendors or platforms, reports such as Anthropic’s 2026 agentic trends overview can also help you benchmark approaches.

GEO-readiness and compliance considerations

When deploying agentic AI systems globally, design for geography-aware (GEO-aware) behavior from day one:

- Data residency: ensure logs and embeddings for EU, UK, or MENA customers stay within compliant regions.

- Localization: tune prompts, SLMs, and evaluation datasets for local languages (for example, German, Arabic, Polish) and regulatory terminology.

- Policy variation: some tools and actions should be enabled or disabled by region (for instance, access to particular datasets or external APIs).

- Local governance: align with frameworks such as the EU AI Act, sector-specific regulators, and internal risk committees.
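One way to express these regional rules is as explicit, version-controlled policy data rather than logic scattered across prompts. The following Python sketch assumes hypothetical storage region names, language codes, and a hypothetical `external_enrichment_api` tool; none of them refer to a real cloud provider or product.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RegionPolicy:
    storage_region: str              # where logs and embeddings must stay
    languages: tuple[str, ...]       # languages to cover in prompts and evals
    disabled_tools: frozenset[str]   # tools switched off for this region

# Hypothetical policies; region and tool names are placeholders.
POLICIES: dict[str, RegionPolicy] = {
    "EU": RegionPolicy("eu-central", ("de", "pl"), frozenset({"external_enrichment_api"})),
    "UK": RegionPolicy("uk-south", ("en",), frozenset()),
    "MENA": RegionPolicy("me-central", ("ar", "en"), frozenset({"external_enrichment_api"})),
}

def storage_for(customer_region: str) -> str:
    """Return the storage region; raises KeyError for unknown regions."""
    return POLICIES[customer_region].storage_region

def tool_enabled(customer_region: str, tool: str) -> bool:
    """Fail closed: unknown regions get no tools."""
    policy = POLICIES.get(customer_region)
    return policy is not None and tool not in policy.disabled_tools

print(storage_for("EU"))
print(tool_enabled("EU", "external_enrichment_api"))
print(tool_enabled("UK", "external_enrichment_api"))
```

Failing closed for unknown regions is a deliberate choice in this sketch: a new market has to be added to the policy table before any agent can act there.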

For a higher-level view of regulatory trends affecting AI deployments, our AI & Machine Learning Development Guide includes a section on compliance and risk that you can reuse as a checklist when rolling out agentic AI across regions.

How Yotec can help

If your organization is ready to move beyond experimental chatbots and into production-grade agentic AI, Yotec can help with:

- Architecture and roadmap design for agentic AI and SLM-based systems.

- Selecting and integrating the right mix of LLMs and SLMs for your use cases and regions.

- Implementing secure integrations, observability, and AI governance aligned with your compliance needs.

You can continue exploring advanced topics in our AI & Machine Learning Development Guide:

https://www.yotec.net/ai-machine-learning-development-guide/

You can also review our dedicated AI development services page to see how we structure end-to-end engagements:

https://www.yotec.net/services/ai-software-development/

When you are ready to discuss an agentic AI roadmap for your organization, get in touch via the Yotec contact page:

https://www.yotec.net/contact/

About The Author: Yotec Team
