In this article, we will look at a non-obvious issue that arises when working with TensorFlow and Keras: loading and running several models at the same time. If you are not familiar with how TensorFlow and Keras work internally, this can be a stumbling block, especially for beginners.

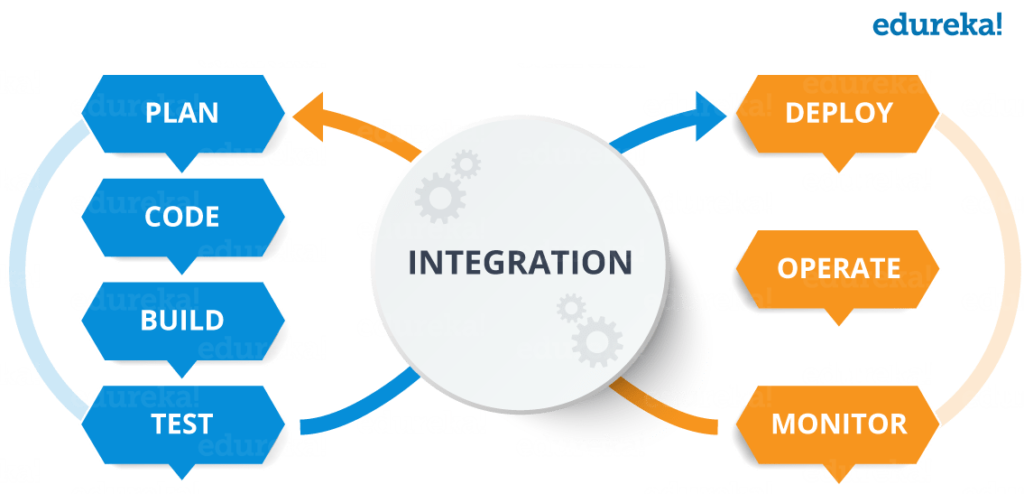

TensorFlow represents the computations of a neural network model in memory as a graph of dependencies between operations, which is built during initialization. When the model is executed, TensorFlow performs the computations on that graph within a specific session. I will not go into the details of these entities in TensorFlow here.
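To make the graph and session split concrete, here is a minimal sketch. It assumes a TensorFlow version that ships the `tf.compat.v1` module (1.13 or later, including 2.x); the alias `tf1` and the tensor names are chosen only for this illustration.

```python
import tensorflow as tf

# Assumption: the 1.x-style graph/session API is reached through tf.compat.v1.
tf1 = tf.compat.v1

# Build phase: operations are only recorded in the graph, nothing is computed yet.
graph = tf1.Graph()
with graph.as_default():
    a = tf1.constant(2.0, name="a")
    b = tf1.constant(3.0, name="b")
    total = tf1.add(a, b, name="total")

# Run phase: a session bound to that graph evaluates the requested tensor.
with tf1.Session(graph=graph) as sess:
    value = sess.run(total)

print(total.graph is graph)  # the tensor belongs to the graph it was built in
print(value)
```

The point to take away is that every tensor is tied to exactly one graph, and a session can only evaluate tensors from the graph it was created for.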

A typical error in this situation looks like this:

    Tensor("norm_layer/l2_normalize:0", shape=(?, 128), dtype=float32) is not an element of this graph

The reason for the error is that Keras by default works only with the default session, and a newly created session is not automatically registered as the default one.

When working with the Keras model, the user must explicitly set the new session as the default session. This can be done like this:

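    # Give this model its own graph instead of the shared global default.
    self.graph = tf.Graph()
    with self.graph.as_default():
        # Bind a new session to that graph.
        self.session = tf.Session(graph=self.graph)
        with self.session.as_default():
            # Build the model and load its weights inside this graph and session.
            self.model = WideResNet(face_size, depth=depth, k=width)()
            model_dir = <model_path>
            ...
            self.model.load_weights(fpath)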

We create a new TensorFlow Graph and Session and load the model inside the new TensorFlow session.

The line

    with self.graph.as_default():

means that we want to use the new graph (self.graph) as the default graph, and the line

    with self.session.as_default():

indicates that we want to use self.session as the default session and execute the subsequent code within it. The with construct creates a context manager, which performs setup on entering the block and cleanup on leaving it; it is commonly used with resource-intensive objects (for example, when reading files), because it releases them automatically on exit. Here, leaving the with block only restores the previous default graph and session. It does not close the session, so self.session.close() has to be called explicitly once the model is no longer needed.
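The idea of a "default" that is swapped in and out by nested with blocks can be sketched in pure Python. This is a toy model, not TensorFlow's actual implementation; `DefaultStack` and its methods are hypothetical names introduced for the illustration.

```python
import contextlib


class DefaultStack:
    """Toy stand-in for a 'current default' registry (not TensorFlow API)."""

    def __init__(self, initial):
        self._stack = [initial]

    def current(self):
        # The most recently entered default wins.
        return self._stack[-1]

    @contextlib.contextmanager
    def as_default(self, obj):
        # Entering the block pushes a new default ...
        self._stack.append(obj)
        try:
            yield obj
        finally:
            # ... and leaving it restores the previous one, even on errors.
            self._stack.pop()


graphs = DefaultStack("global graph")
seen = [graphs.current()]
with graphs.as_default("model A graph"):
    seen.append(graphs.current())
    with graphs.as_default("model B graph"):
        seen.append(graphs.current())
    seen.append(graphs.current())
seen.append(graphs.current())

print(seen)
# ['global graph', 'model A graph', 'model B graph', 'model A graph', 'global graph']
```

Code that silently relies on "whatever is the default right now" therefore behaves differently depending on which with block it runs in, which is why the blocks have to wrap both loading and prediction.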

When we need to run a prediction, we do it like this:

    with self.graph.as_default():
        with self.session.as_default():
            result = self.model.predict(np.expand_dims(img, axis=0), batch_size=1)

We simply call the predict() method inside the TF session created earlier, with the same graph and session set as defaults.
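Putting loading and prediction together, the pattern can be sketched as a small wrapper that owns one graph and one session per model. To keep the sketch self-contained, a trivial multiplication stands in for building a Keras model and loading its weights; `IsolatedModel`, `scale` and the other names are hypothetical, and the `tf.compat.v1` API is assumed to be available.

```python
import numpy as np
import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TensorFlow with the 1.x compatibility API


class IsolatedModel:
    """Hypothetical wrapper: one private graph and session per model."""

    def __init__(self, scale):
        self.graph = tf1.Graph()
        with self.graph.as_default():
            self.session = tf1.Session(graph=self.graph)
            with self.session.as_default():
                # Stand-in for creating a Keras model and calling load_weights().
                self.inputs = tf1.placeholder(tf.float32, shape=[None, 2])
                self.outputs = self.inputs * scale

    def predict(self, batch):
        # Re-enter the same graph and session that were used for loading.
        with self.graph.as_default():
            with self.session.as_default():
                return self.session.run(self.outputs, feed_dict={self.inputs: batch})

    def close(self):
        # as_default() does not close the session, so do it explicitly.
        self.session.close()


doubler = IsolatedModel(2.0)
tripler = IsolatedModel(3.0)
batch = np.array([[1.0, 2.0]], dtype=np.float32)

doubled = doubler.predict(batch)
tripled = tripler.predict(batch)

doubler.close()
tripler.close()

print(doubled, tripled)
```

Because each instance carries its own graph and session, the two models can be loaded side by side and called in any order without interfering with each other.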

That’s all for now. Good luck to everyone and see you soon!

About The Author: Yotec Team
